Claude Code Leaked: The Source Map Slip That Exposed Anthropic's Most Guarded Codebase

On March 31, 2026, Anthropic accidentally shipped its full Claude Code source—512,000 lines of TypeScript—inside an npm package. Here's everything we learned: secret agent modes, fake tools to poison rivals, frustration detection via regex, and an unreleased autonomous AI called KAIROS.


“Claude code source code has been leaked via a map file in their npm registry!” — Chaofan Shou (@Fried_rice), March 31, 2026, 4:23 AM ET

It started, as most internet-breaking moments do, with a single tweet in the early hours of the morning.

By the time most engineers had their first coffee, the full proprietary source code of Claude Code — Anthropic’s flagship agentic CLI tool — was already mirrored across dozens of GitHub repositories, dissected on Hacker News, reposted on X, and being rewritten from scratch in Rust. One mirror became the fastest repository in history to surpass 50,000 GitHub stars, hitting the milestone in just two hours.

This is the story of what happened, how it happened, and — most importantly — what the code actually revealed.

How the Leak Happened

Anthropic ships Claude Code as an npm package (@anthropic-ai/claude-code). Like many modern JavaScript tools, the build pipeline produces minified output and, for debugging purposes, accompanying source maps — .map files that point back to the original, human-readable TypeScript source.

On March 31, 2026, someone flipped the wrong switch. The published npm package contained a .map file that didn’t just point to the source — it contained a direct URL to a full ZIP archive of the unminified source, sitting in Anthropic’s own Cloudflare R2 storage bucket.
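To make the failure mode concrete, here is a minimal sketch of a pre-publish check that reports what a .map file would expose. It assumes the standard Source Map v3 fields (`sources`, `sourcesContent`, `sourceRoot`). The function name `auditSourceMap` and the example URL are hypothetical, and nothing here is taken from Anthropic's build pipeline.

```typescript
// Minimal shape of a Source Map v3 file; only the fields this check reads.
interface SourceMapV3 {
  version: number;
  sources: string[];
  sourcesContent?: (string | null)[];
  sourceRoot?: string;
}

interface MapAudit {
  embeddedSources: number; // entries whose original text is inlined in the map
  remoteUrls: string[];    // http(s) URLs the map points at
}

// Hypothetical helper: summarize what a published .map file would reveal.
function auditSourceMap(mapJson: string): MapAudit {
  const map = JSON.parse(mapJson) as SourceMapV3;
  const embeddedSources = (map.sourcesContent ?? []).filter(
    (text) => typeof text === "string" && text.length > 0,
  ).length;
  const candidates = [map.sourceRoot ?? "", ...map.sources];
  const remoteUrls = candidates.filter((s) => /^https?:\/\//i.test(s));
  return { embeddedSources, remoteUrls };
}

// Example input with one inlined source and one remote reference (made-up URL).
const audit = auditSourceMap(
  JSON.stringify({
    version: 3,
    sources: ["src/cli.ts", "https://example.invalid/src.zip"],
    sourcesContent: ["const x = 1;", null],
  }),
);
// audit.embeddedSources is 1 and audit.remoteUrls holds the example.invalid URL
```

A check like this could run in CI against every .map file in the package tarball and fail the publish step when either field is non-empty.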

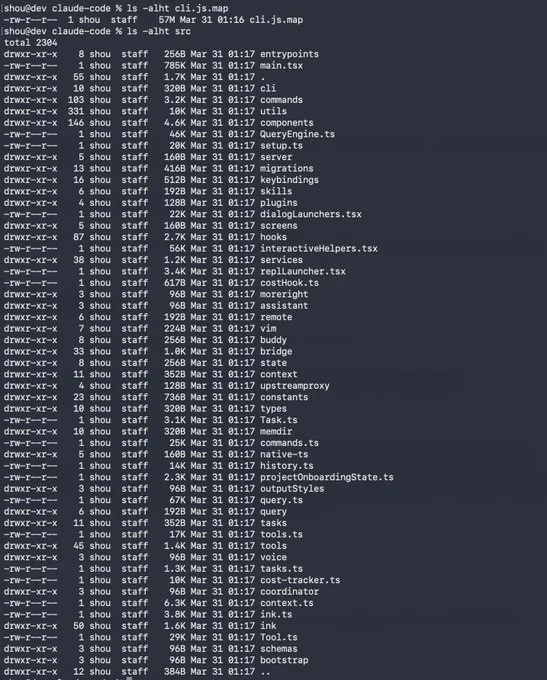

The contents: approximately 1,900 TypeScript files and 512,000+ lines of code — the entire src/ directory, written in strict TypeScript, running on the Bun runtime with a React + Ink terminal UI framework.

Chaofan Shou (@Fried_rice), an intern at Solayer Labs, spotted it first. His post hit 3.1 million views. Anthropic quietly pulled the package. But by then, the archive had spread everywhere.

Anthropic’s official statement was brief: “Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed.”

For a company currently generating a reported $19 billion in annualized revenue — with Claude Code alone accounting for roughly $2.5 billion ARR — this was not a minor embarrassment. It was a strategic blueprint handed to every competitor.

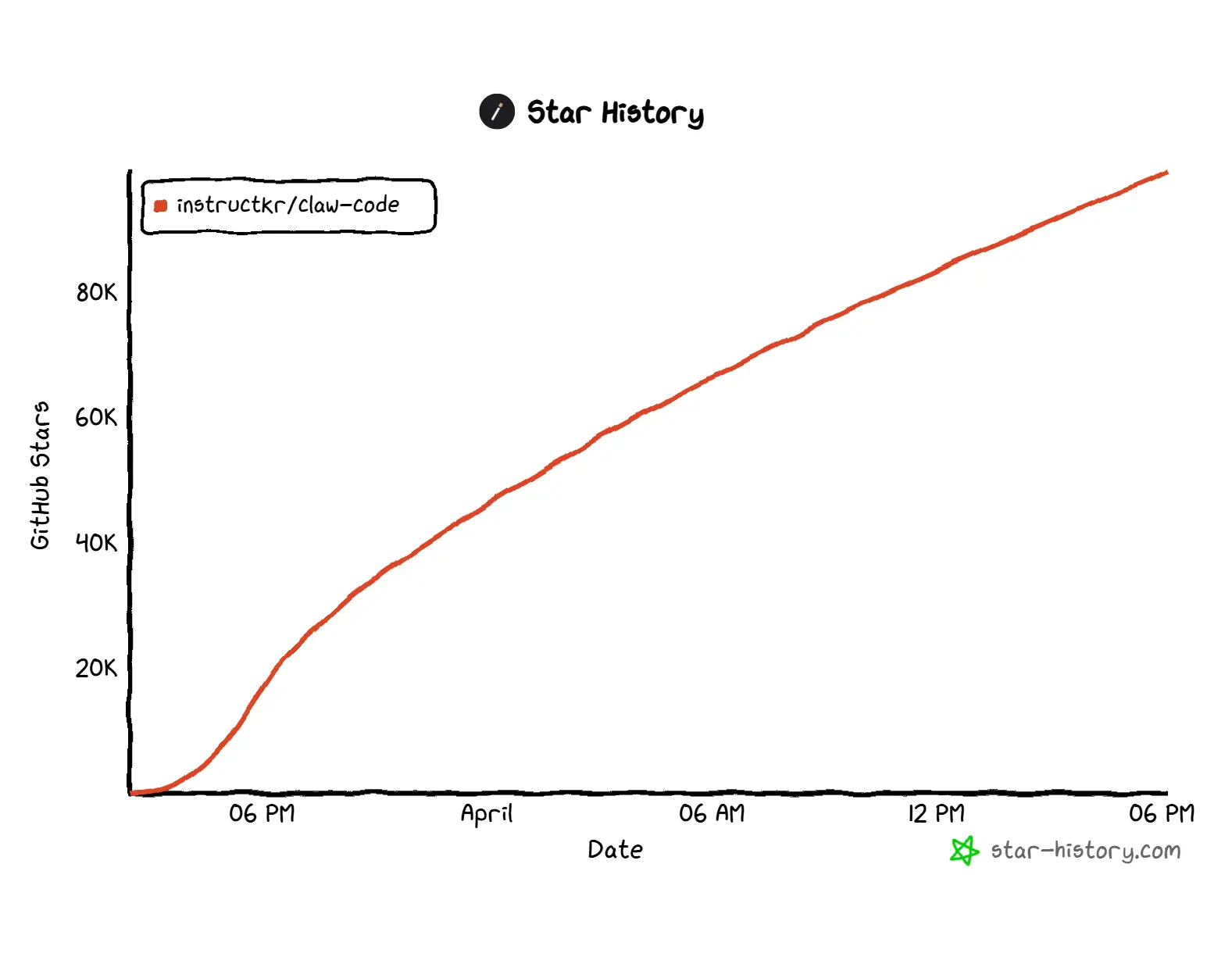

The Community Response: 50K Stars in Two Hours

The response from the open-source community was extraordinary.

Developer Sigrid Jin (GitHub: instructkr) woke up at 4 AM in Korea to a phone flooded with notifications. Within hours, he had ported the core architectural patterns to Python and pushed the code. The resulting repository, claw-code, became a phenomenon:

- 50,000 stars in under two hours (a GitHub record)

- 101,000 stars and counting as of this writing

- 92,000 forks

- A Rust rewrite already in progress on the dev/rust branch

The porting work was orchestrated end-to-end using oh-my-codex (OmX), an AI workflow layer built on top of OpenAI’s Codex. Jin used $team mode for parallel code review and $ralph mode for persistent execution loops — a fitting irony, given the rivalry between AI labs.

Sigrid Jin is no stranger to Claude Code. He’s the person the Wall Street Journal described as having “single-handedly used 25 billion Claude Code tokens last year” — attending Claude Code’s first birthday party in San Francisco alongside a Belgian cardiologist who’d built a patient navigation app and a California lawyer automating building permit approvals. The crowd at that event, Jin told the WSJ, was “lawyers, doctors, dentists — they did not have software engineering backgrounds.”

The Architecture: What Claude Code Actually Is

Before diving into the secrets, it helps to understand the architecture that the leak revealed.

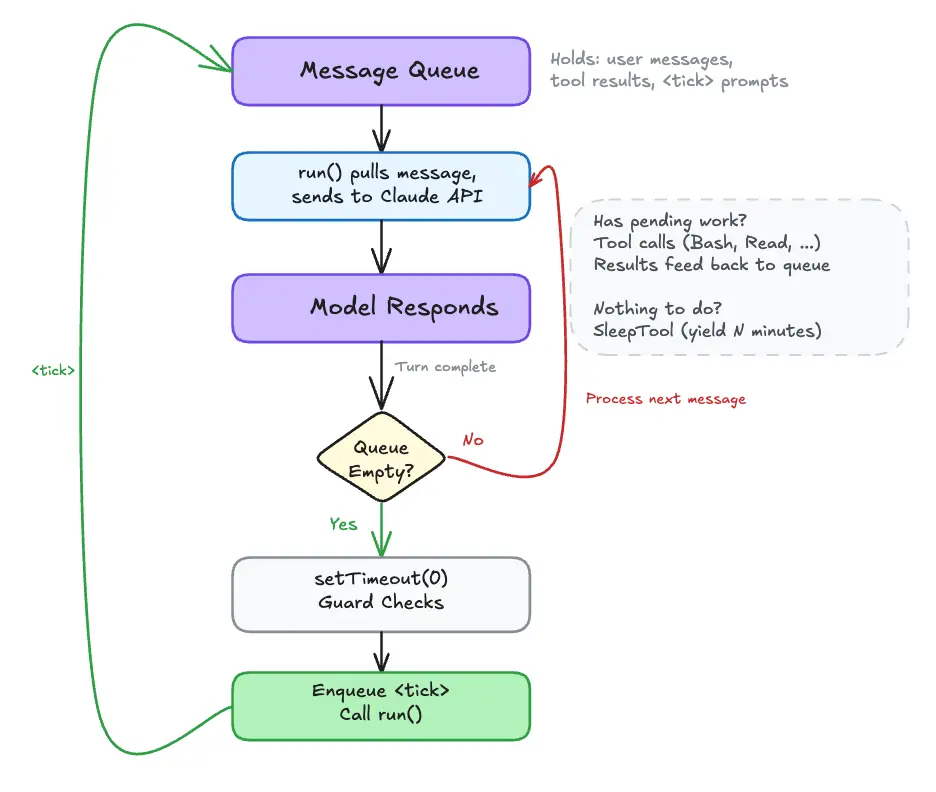

Claude Code is a terminal-based agentic loop. Every interaction follows the same cycle:

- You type a message. It’s appended to the conversation history.

- Context is assembled. A system prompt is constructed that includes the current date, git status (branch, last 5 commits, working tree), any loaded CLAUDE.md memory files, and the list of available tools. This is built once and memoized for the session.

- Claude reasons. The assembled conversation goes to the Anthropic API. The model emits tool_use blocks specifying which tools to call.

- Permission check. Before every tool call, Claude Code evaluates permission mode and allow/deny rules. It either auto-approves, asks you for confirmation, or blocks.

- Tool executes. Results (file contents, shell output, search hits) are appended as tool_result blocks.

- Loop repeats until no tool calls remain, or you get a final text response.
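The cycle above can be sketched in a few dozen lines. This is an illustration of the pattern, not the leaked implementation: the message shapes are simplified, the model call is a synchronous stand-in, and every name (`runTurn`, `LoopDeps`, `maxSteps`) is hypothetical.

```typescript
// Simplified message shapes; the real API nests tool results differently.
type ToolUse = { type: "tool_use"; id: string; name: string; input: string };
type TextBlock = { type: "text"; text: string };
type Block = ToolUse | TextBlock;
type Message =
  | { role: "user"; content: string }
  | { role: "assistant"; content: Block[] }
  | { role: "tool_result"; toolUseId: string; content: string };

type Decision = "allow" | "ask" | "deny";

interface LoopDeps {
  callModel: (history: Message[]) => Block[];   // stand-in for the API request
  checkPermission: (call: ToolUse) => Decision; // permission mode and rules
  confirm: (call: ToolUse) => boolean;          // user prompt for "ask"
  runTool: (call: ToolUse) => string;           // executes in the local process
}

function runTurn(userText: string, history: Message[], deps: LoopDeps, maxSteps = 25): string {
  history.push({ role: "user", content: userText });
  for (let step = 0; step < maxSteps; step++) {
    const blocks = deps.callModel(history);
    history.push({ role: "assistant", content: blocks });
    const calls = blocks.filter((b): b is ToolUse => b.type === "tool_use");
    if (calls.length === 0) {
      // No tool calls left: the text blocks are the final answer.
      return blocks
        .filter((b): b is TextBlock => b.type === "text")
        .map((b) => b.text)
        .join("");
    }
    for (const call of calls) {
      const decision = deps.checkPermission(call);
      const allowed = decision === "allow" || (decision === "ask" && deps.confirm(call));
      const output = allowed ? deps.runTool(call) : "Permission denied by user or rule.";
      history.push({ role: "tool_result", toolUseId: call.id, content: output });
    }
  }
  throw new Error("agent loop exceeded maxSteps");
}

// Scripted model: first asks to read a file, then answers.
let scriptedStep = 0;
const transcript: Message[] = [];
const answer = runTurn("What is in README.md?", transcript, {
  callModel: () =>
    scriptedStep++ === 0
      ? [{ type: "tool_use", id: "t1", name: "Read", input: "README.md" }]
      : [{ type: "text", text: "The README says hello." }],
  checkPermission: () => "allow",
  confirm: () => false,
  runTool: (call) => `contents of ${call.input}`,
});
```

After the call, `transcript` holds four entries (user, assistant, tool result, assistant) and `answer` is the final text. The `maxSteps` guard is an addition for the sketch so a misbehaving model stub cannot spin forever.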

Crucially: the loop runs entirely in your terminal process. There is no remote execution server. Your files never leave your machine unless a tool explicitly sends them (like WebFetch or an MCP server).

The query engine drives each turn — streaming tokens to your terminal in real time, dispatching tool calls, enforcing per-turn token budgets, and triggering context compaction when the window fills up. Each tool has a maxResultSizeChars limit; results beyond that are saved to a temp file and the model receives a preview with the path, preventing context overflow from large outputs.
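A rough sketch of the result-capping behavior described here, under stated assumptions: only the `maxResultSizeChars` name comes from the leak. The `save` callback, the preview length, and the wording of the truncation notice are invented for illustration.

```typescript
interface CappedResult {
  content: string;  // what the model sees
  savedTo?: string; // set only when the full output was spilled elsewhere
}

// `save` is a hypothetical callback that persists the text and returns its path.
function capToolResult(
  output: string,
  maxResultSizeChars: number,
  save: (full: string) => string,
  previewChars = 200,
): CappedResult {
  if (output.length <= maxResultSizeChars) return { content: output };
  const savedTo = save(output);
  const preview = output.slice(0, Math.min(previewChars, maxResultSizeChars));
  return {
    content: `${preview}\n[truncated: ${output.length} chars total, full output at ${savedTo}]`,
    savedTo,
  };
}

const spilled: string[] = [];
const small = capToolResult("ok", 10, () => "/tmp/unused");
const large = capToolResult("x".repeat(50), 10, (full) => {
  spilled.push(full);
  return "/tmp/tool-result-1.txt"; // placeholder path
});
// small passes through untouched; large becomes a 10-char preview plus a pointer
```

The design keeps the context window bounded regardless of tool output size while still letting the model request the full file later through an ordinary read.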

The System Prompt Is Not a String

One of the most technically interesting revelations: Claude Code’s system prompt is not a static string. It’s dynamically assembled at runtime by a pipeline of section-builder functions:

    getSystemPrompt()
    |
    |   Static Prefix (globally cached across sessions)
    |-- getSimpleIntroSection()            Identity and Cyber Risk
    |-- getSimpleSystemSection()           Permission modes, hooks, reminders
    |-- getSimpleDoingTasksSection()       Code style, security, error handling
    |-- getActionsSection()                Reversibility, blast radius
    |-- getUsingYourToolsSection()         Tool preferences, parallel calls
    |-- getSimpleToneAndStyleSection()     No emojis, concise references
    |-- getOutputEfficiencySection()       Inverted pyramid communication
    |
    |   SYSTEM_PROMPT_DYNAMIC_BOUNDARY     ← The cache split point
    |
    |   Dynamic Suffix (session-specific, regenerated each time)
    |-- getSessionSpecificGuidanceSection()
    |-- loadMemoryPrompt()                 CLAUDE.md content
    |-- computeSimpleEnvInfo()             CWD, OS, git state, model info
    |-- getLanguageSection()
    |-- getMcpInstructionsSection()
    └── getFunctionResultClearingSection()

The SYSTEM_PROMPT_DYNAMIC_BOUNDARY marker is the key. Everything above it is identical across sessions and gets globally cached on Anthropic’s servers, dramatically reducing API costs. Everything below is session-specific and regenerated fresh.
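A minimal sketch of the split, assuming nothing beyond what the tree shows: static builders that take no session input, a boundary marker, and dynamic builders that do. The marker's literal value, the section text, and the helper names (`SessionInfo`, `splitAtBoundary`) are placeholders rather than anything quoted from the source.

```typescript
// Placeholder value: the leak names the marker, but its literal text is not quoted here.
const SYSTEM_PROMPT_DYNAMIC_BOUNDARY = "<<SYSTEM_PROMPT_DYNAMIC_BOUNDARY>>";

interface SessionInfo {
  cwd: string;
  memory: string; // stand-in for CLAUDE.md content
}

// Static builders take no session input, so their output is identical everywhere.
const staticSections: Array<() => string> = [
  () => "You are a coding agent running in a terminal.",
  () => "Prefer reversible actions and consider blast radius.",
  () => "Be concise. No emojis.",
];

function dynamicSections(s: SessionInfo): string[] {
  return [`Project memory:\n${s.memory}`, `Working directory: ${s.cwd}`];
}

function getSystemPrompt(s: SessionInfo): string {
  return [
    ...staticSections.map((build) => build()),
    SYSTEM_PROMPT_DYNAMIC_BOUNDARY,
    ...dynamicSections(s),
  ].join("\n\n");
}

// Split a built prompt into the shareable prefix and the per-session suffix.
function splitAtBoundary(prompt: string): { cacheable: string; perSession: string } {
  const at = prompt.indexOf(SYSTEM_PROMPT_DYNAMIC_BOUNDARY);
  if (at < 0) return { cacheable: "", perSession: prompt };
  return {
    cacheable: prompt.slice(0, at),
    perSession: prompt.slice(at + SYSTEM_PROMPT_DYNAMIC_BOUNDARY.length),
  };
}

const promptA = splitAtBoundary(getSystemPrompt({ cwd: "/repo/a", memory: "Use pnpm." }));
const promptB = splitAtBoundary(getSystemPrompt({ cwd: "/repo/b", memory: "Use cargo." }));
// promptA.cacheable === promptB.cacheable, while the perSession halves differ
```

Because prefix caches match on exact leading content, anything session-specific placed above the boundary would defeat the cache for every user, which is presumably why the ordering is enforced structurally.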

A complete collection of all 18 extracted prompts — including the main system prompt, agent directives, security classifiers, and utility prompts — has been catalogued in the claude-code-system-prompts repository by Leon Lin.

The Secrets: What Was Hidden in 512,000 Lines

Now for the part everyone’s been waiting for. Here’s what the leak actually revealed — roughly ordered from interesting to genuinely eyebrow-raising.

1. The Agentic Loop Has Sub-Agents

Claude Code can spawn sub-agents via an internal Task tool (AgentTool). Each sub-agent runs its own nested agentic loop with an isolated conversation and, optionally, a restricted tool set. Sub-agents can run locally (in-process) or on remote compute. Results bubble back up to the parent agent.

This is the foundation of Claude Code’s multi-agent workflows — and it’s more sophisticated than the public docs let on.

2. Anti-Distillation: Fake Tools to Poison Competitors

In claude.ts, there’s a flag called ANTI_DISTILLATION_CC. When enabled, Claude Code sends anti_distillation: ['fake_tools'] in its API requests. This tells Anthropic’s servers to silently inject fake, decoy tool definitions into the system prompt.

The logic: if a competitor is recording Claude Code’s API traffic to train a rival model, the fake tool definitions pollute that training data. It’s gated behind a GrowthBook feature flag and only active for first-party CLI sessions.
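In outline, the client-side half of this is just a conditional request field. The sketch below assumes a simplified request shape and two boolean gates. Only the `anti_distillation: ['fake_tools']` field is taken from the leak, while `RequestBody`, `Gates`, and the model string are hypothetical.

```typescript
interface RequestBody {
  model: string;
  messages: unknown[];
  anti_distillation?: string[];
}

// Hypothetical gate names standing in for the feature flag and the first-party check.
interface Gates {
  antiDistillationFlag: boolean;
  firstPartyCli: boolean;
}

function withAntiDistillation(body: RequestBody, gates: Gates): RequestBody {
  if (!gates.antiDistillationFlag || !gates.firstPartyCli) return body;
  // The server, not the client, is described as injecting the decoy tools.
  return { ...body, anti_distillation: ["fake_tools"] };
}

const baseRequest: RequestBody = { model: "example-model", messages: [] };
const gated = withAntiDistillation(baseRequest, { antiDistillationFlag: true, firstPartyCli: true });
const ungated = withAntiDistillation(baseRequest, { antiDistillationFlag: true, firstPartyCli: false });
// gated carries the extra field; ungated is returned unchanged
```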

There’s also a second mechanism: server-side connector-text summarization. When enabled, the API buffers the assistant’s reasoning between tool calls, summarizes it, and returns the summary with a cryptographic signature. A model distilling from the traffic only gets the summaries, never the full reasoning chain.

Clever? Yes. Circumventable? Also yes — a MITM proxy stripping the anti_distillation field from requests, or simply setting CLAUDE_CODE_DISABLE_EXPERIMENTAL_BETAS, bypasses it. The real protection is likely legal, not technical.

3. Undercover Mode: The AI That Hides It’s an AI

The file undercover.ts (about 90 lines) implements a mode that strips all traces of Anthropic internals when Claude Code operates in non-internal repos. It instructs the model to never mention internal codenames like “Capybara” or “Tengu,” internal Slack channels, repo names, or even the phrase “Claude Code” itself.

The comment at line 15:

“There is NO force-OFF. This guards against model codename leaks.”

You can force it ON with CLAUDE_CODE_UNDERCOVER=1, but there’s no way to force it off. In external builds, the entire function is dead-code-eliminated.
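The asymmetry is easy to express in code. This sketch assumes a boolean `repoIsInternal` input, which is a hypothetical stand-in for however the real code classifies repositories. The environment variable name is the one quoted above.

```typescript
function isUndercover(
  env: Record<string, string | undefined>,
  repoIsInternal: boolean, // hypothetical classification result
): boolean {
  // Force ON: the only override that exists.
  if (env["CLAUDE_CODE_UNDERCOVER"] === "1") return true;
  // No value of the variable turns the mode off; only an internal repo does.
  return !repoIsInternal;
}

const forcedOn = isUndercover({ CLAUDE_CODE_UNDERCOVER: "1" }, true);    // true
const cannotOptOut = isUndercover({ CLAUDE_CODE_UNDERCOVER: "0" }, false); // true
const internalDefault = isUndercover({}, true);                           // false
```

Making the safe state unreachable by configuration means a misconfigured environment can only err toward hiding codenames, never toward leaking them.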

The practical implication: AI-authored commits and PRs from Anthropic employees in open-source projects will show no indication that an AI wrote them. Hiding internal codenames makes sense. Actively having the AI present itself as human is a more complicated ethical question.

4. Frustration Detection via Regex

userPromptKeywords.ts contains a regex pattern that detects user frustration:

    /\b(wtf|wth|ffs|omfg|shit(ty|tiest)?|dumbass|horrible|awful|piss(ed|ing)? off|piece of (shit|crap|junk)|what the (fuck|hell)|fucking? (broken|useless|terrible|awful|horrible)|fuck you|screw (this|you)|so frustrating|this sucks|damn it)\b/

An LLM company using regex for sentiment analysis is a delightfully pragmatic choice. A regex is faster and cheaper than an inference call just to check if someone is swearing at your tool. Whether the detection changes anything about Claude’s behavior remains unclear from the source alone.
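To show how such a check behaves, here is a sketch using an abridged subset of the alternatives from the pattern quoted above. The `i` flag and the function name `looksFrustrated` are additions for this example. The quoted pattern shows no flags, so case handling in the real code is unknown.

```typescript
// Abridged subset of the quoted pattern; the `i` flag is an assumption of this sketch.
const FRUSTRATION =
  /\b(wtf|ffs|horrible|awful|so frustrating|this sucks|damn it|screw (this|you)|piece of (crap|junk))\b/i;

function looksFrustrated(prompt: string): boolean {
  // No `g` flag, so test() keeps no state between calls.
  return FRUSTRATION.test(prompt);
}

const hit = looksFrustrated("this sucks, the build is still red");  // true
const shout = looksFrustrated("WTF happened to my branch");          // true
const neutral = looksFrustrated("Please rename this variable");      // false
const boundary = looksFrustrated("Is this lawful?");                 // false: \b stops "awful" matching inside "lawful"
```

The word boundaries matter more than they look: without them, ordinary words that merely contain one of the alternatives would trip the detector.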

5. Native Client Attestation: DRM for API Calls

In system.ts, API requests include a cch=00000 placeholder. Before the request leaves the process, Bun’s native HTTP stack (written in Zig) overwrites those five zeros with a computed hash. Anthropic’s servers validate this hash to confirm the request came from a real, official Claude Code binary — not a third-party tool spoofing it.

The placeholder is the same length as the hash so the replacement doesn’t change the Content-Length header or require buffer reallocation. The computation happens below the JavaScript runtime, invisible to anything in the JS layer.
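The same-length trick can be illustrated with a byte buffer. Everything below is a sketch under loud assumptions: the digest is a toy FNV-1a truncated to five hex characters, chosen only so the example runs. The leak as described here does not reveal the real hash, its inputs, or where in the request the placeholder sits.

```typescript
const PLACEHOLDER = "cch=00000";

function asciiBytes(s: string): Uint8Array {
  const out = new Uint8Array(s.length);
  for (let i = 0; i < s.length; i++) out[i] = s.charCodeAt(i) & 0x7f;
  return out;
}

function asciiString(bytes: Uint8Array): string {
  let s = "";
  for (let i = 0; i < bytes.length; i++) s += String.fromCharCode(bytes[i]);
  return s;
}

// Toy stand-in for the real attestation hash (which is not public): FNV-1a, 5 hex chars.
function toyDigest5(bytes: Uint8Array): string {
  let h = 0x811c9dc5;
  for (let i = 0; i < bytes.length; i++) {
    h ^= bytes[i];
    h = Math.imul(h, 0x01000193) >>> 0;
  }
  return h.toString(16).padStart(8, "0").slice(0, 5);
}

// Overwrite the five zeros in place; the buffer is never resized or copied.
function stampInPlace(body: Uint8Array): boolean {
  const needle = asciiBytes(PLACEHOLDER);
  outer: for (let at = 0; at + needle.length <= body.length; at++) {
    for (let j = 0; j < needle.length; j++) {
      if (body[at + j] !== needle[j]) continue outer;
    }
    body.set(asciiBytes(toyDigest5(body)), at + "cch=".length);
    return true;
  }
  return false;
}

const request = asciiBytes('{"system":"billing cch=00000 end"}');
const lengthBefore = request.length;
const stamped = stampInPlace(request);
// request.length still equals lengthBefore, so a precomputed Content-Length stays valid
```

Since the replacement is exactly as long as the placeholder, headers computed before stamping remain correct and the write is a single in-place copy.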

This is the technical enforcement behind Anthropic’s legal dispute with OpenCode. When Anthropic sent legal threats demanding removal of built-in Claude authentication, it wasn’t just legal pressure — the binary itself was cryptographically proving it was the real client. Third-party tools had to resort to session-stitching workarounds precisely because of this mechanism.

6. 250,000 Wasted API Calls Per Day

A comment buried in autoCompact.ts is remarkable for its frankness:

“BQ 2026-03-10: 1,279 sessions had 50+ consecutive failures (up to 3,272) in a single session, wasting ~250K API calls/day globally.”

The fix? Three lines of code: MAX_CONSECUTIVE_AUTOCOMPACT_FAILURES = 3. After three consecutive compaction failures, compaction is disabled for the rest of the session. A quarter-million API calls a day, fixed with a constant.
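The pattern is a small circuit breaker. The sketch below reuses the constant name and value quoted above, but the class, its methods, and the boolean-returning `compact` callback are hypothetical. The actual three lines are not quoted here.

```typescript
const MAX_CONSECUTIVE_AUTOCOMPACT_FAILURES = 3;

class AutoCompactGuard {
  private consecutiveFailures = 0;

  isDisabled(): boolean {
    return this.consecutiveFailures >= MAX_CONSECUTIVE_AUTOCOMPACT_FAILURES;
  }

  // Runs `compact` unless the breaker has tripped; returns whether it was attempted.
  attempt(compact: () => boolean): boolean {
    if (this.isDisabled()) return false;
    if (compact()) {
      this.consecutiveFailures = 0; // any success resets the streak
    } else {
      this.consecutiveFailures++;
    }
    return true;
  }
}

// A session where compaction always fails makes only three attempts, not thousands.
const guard = new AutoCompactGuard();
let attempts = 0;
for (let i = 0; i < 10; i++) {
  guard.attempt(() => {
    attempts++;
    return false;
  });
}
// attempts is 3 and guard.isDisabled() is true
```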

7. KAIROS: The Unreleased Autonomous Agent Mode

This is the biggest product reveal from the leak.

Throughout the codebase, references to a feature-gated mode called KAIROS point to something much more ambitious than the current Claude Code: an always-on autonomous agent that runs continuously in the background, not just on demand. The scaffolding includes:

- A /dream skill for nightly memory distillation: the agent processes and synthesizes its accumulated context while you sleep

- Daily append-only logs for persistent cross-session memory

- GitHub webhook subscriptions — the agent can watch repositories and react to events autonomously

- Background task scheduling

The implementation is heavily gated, so it’s unclear how far along it is. But the scaffolding for a continuously-running, self-updating AI agent is present in the leaked codebase.

8. BUDDY: The Tamagotchi You Didn’t Ask For

buddy/companion.ts implements a Tamagotchi-style AI companion that sits in a speech bubble next to your terminal input. It’s seeded from your user ID hash. There are 18 species — duck, dragon, axolotl, capybara, mushroom, ghost, and more — with rarity tiers from common to 1% legendary, cosmetics including hats and shiny variants, and five stats: DEBUGGING, PATIENCE, CHAOS, WISDOM, SNARK.

Claude generates a name and personality on first hatch, complete with sprite animations and a floating heart effect.
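Seeding from a user ID hash means the same account always hatches the same companion, with no state to store. A sketch of that idea follows. It lists only the six species named above, uses a toy FNV-1a hash, and collapses the rarity tiers into a single 1-in-100 legendary roll. All of these are assumptions, not the leaked logic.

```typescript
// Only the six species named in the text; the remaining twelve are not listed here.
const SPECIES = ["duck", "dragon", "axolotl", "capybara", "mushroom", "ghost"] as const;
type Species = (typeof SPECIES)[number];

// Toy hash (FNV-1a); the real seeding function is an unknown.
function hashUserId(id: string): number {
  let h = 0x811c9dc5;
  for (let i = 0; i < id.length; i++) {
    h ^= id.charCodeAt(i);
    h = Math.imul(h, 0x01000193) >>> 0;
  }
  return h;
}

function hatch(userId: string): { species: Species; legendary: boolean } {
  const h = hashUserId(userId);
  return {
    species: SPECIES[h % SPECIES.length],
    // Use different bits of the hash for the rarity roll than for the species pick.
    legendary: Math.floor(h / SPECIES.length) % 100 === 0,
  };
}

const first = hatch("user-123");
const again = hatch("user-123");
// first and again are always identical: the companion is a pure function of the ID
```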

This was almost certainly Anthropic’s April Fools’ Day feature for 2026 — which would have dropped the day after the leak. Instead, they shipped it accidentally to the public in source form about 20 hours early.

What the Leak Means for Developers

A separate and serious issue affects anyone who installed or updated Claude Code via npm on March 31, 2026, between 00:21 and 03:29 UTC: a malicious version of axios (1.14.1 or 0.30.4) containing a Remote Access Trojan (RAT) was briefly in circulation. Check your lockfiles (package-lock.json, yarn.lock, bun.lockb) for these versions or the dependency plain-crypto-js.

If found, treat the host machine as fully compromised. Rotate all secrets and perform a clean OS reinstallation.
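For package-lock.json specifically, the check can be automated. This sketch assumes a lockfile with the `packages` map used by npm lockfile versions 2 and 3, where keys look like `node_modules/axios`. The function name is hypothetical, and yarn.lock and bun.lockb use different formats that it does not cover.

```typescript
// Only the part of package-lock.json (lockfileVersion 2 or 3) this check reads.
interface PackageLock {
  packages?: Record<string, { version?: string }>;
}

const BAD_AXIOS_VERSIONS = new Set(["1.14.1", "0.30.4"]);

function findCompromised(lock: PackageLock): string[] {
  const hits: string[] = [];
  for (const [path, meta] of Object.entries(lock.packages ?? {})) {
    // "node_modules/a/node_modules/axios" -> "axios"
    const name = path.split("node_modules/").pop() ?? "";
    if (name === "axios" && meta.version !== undefined && BAD_AXIOS_VERSIONS.has(meta.version)) {
      hits.push(`${path}@${meta.version}`);
    }
    if (name === "plain-crypto-js") hits.push(path);
  }
  return hits;
}

// Made-up lockfile fragment for illustration.
const findings = findCompromised({
  packages: {
    "": {},
    "node_modules/axios": { version: "1.14.1" },
    "node_modules/foo/node_modules/axios": { version: "1.7.0" },
    "node_modules/plain-crypto-js": { version: "0.0.0" },
  },
});
// findings lists the top-level axios entry and plain-crypto-js, but not the nested 1.7.0 copy
```

In practice the input would come from `JSON.parse` on the lockfile contents. An empty result is reassuring only for the lockfile that was checked, not for globally installed packages.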

Anthropic has designated the Native Installer (curl -fsSL https://claude.ai/install.sh | bash) as the recommended installation path going forward, as it uses a standalone binary that bypasses the npm dependency chain entirely. The native version also supports background auto-updates.

The System Prompts: Now Catalogued

Leon Lin has compiled every internal system prompt extracted from the leak into a structured reference at claude-code-system-prompts. The collection covers 18 distinct prompts:

- Core Identity: Main system prompt, simple mode, default agent prompt, cyber risk instruction

- Orchestration: Multi-worker coordinator, teammate protocol

- Specialized Agents: Verification agent (adversarial testing), explore agent (read-only), agent creation architect, status line setup agent

- Security: Permission explainer, 2-stage auto-mode classifier

- Tool Descriptions: All 30+ tool prompts including Bash, Edit, Agent, and fork semantics

- Utilities: Tool use summary generator, session search, memory selection, auto-mode critique

- Dynamic Sections: Proactive mode (autonomous agent with tick-based pacing)

The auto-approval system uses a 2-stage classifier: Stage 1 runs fast. Stage 2 uses extended thinking only if Stage 1’s result is uncertain. It’s a lean, cost-optimized approach to safety-critical decisions.
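The escalation logic can be sketched as follows, with both stages passed in as plain functions. The heuristics in the example are toys, and the type and function names are hypothetical. Only the structure, a cheap first pass with an expensive second pass reserved for uncertain cases, comes from the description above.

```typescript
type FinalVerdict = "allow" | "block";
type FastVerdict = FinalVerdict | "uncertain";

interface Stages {
  fast: (command: string) => FastVerdict;      // cheap first pass
  thorough: (command: string) => FinalVerdict; // expensive pass, e.g. extended thinking
}

function classify(command: string, stages: Stages): { verdict: FinalVerdict; stage: 1 | 2 } {
  const first = stages.fast(command);
  if (first !== "uncertain") return { verdict: first, stage: 1 };
  return { verdict: stages.thorough(command), stage: 2 };
}

// Toy heuristics, purely for illustration.
let thoroughCalls = 0;
const stages: Stages = {
  fast: (cmd) => (cmd === "ls" ? "allow" : cmd.indexOf("rm -rf /") === 0 ? "block" : "uncertain"),
  thorough: (cmd) => {
    thoroughCalls++;
    return cmd.indexOf("curl") >= 0 ? "block" : "allow";
  },
};

const easy = classify("ls", stages);               // resolved at stage 1
const hard = classify("git push --force", stages); // escalated to stage 2
// thoroughCalls is 1: the expensive stage ran only for the uncertain command
```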

The Rust Rewrite and What Comes Next

The claw-code repository (now at 80K+ stars) has shifted its primary focus from merely archiving the leak to engineering something better. The Rust port is in progress on the dev/rust branch, aiming for a faster, memory-safe harness runtime. The current src/ directory is a functional Python workspace that mirrors Claude Code’s architectural patterns without copying proprietary source.

This is the community doing what it does best: taking an accidental disclosure and turning it into open infrastructure. The ethical and legal questions are genuinely complex (the repo’s README includes a full essay on AI reimplementation and copyleft), but the technical momentum is undeniable.

The Bigger Picture

The Claude Code leak arrives at a peculiar moment. Anthropic has positioned itself as the safety-first AI lab. This is the second accidental disclosure in a week (a model spec document leaked just days prior). The source is rich with evidence of a company moving fast and building sophisticated internal tooling — anti-distillation measures, client attestation, multi-agent orchestration, undercover modes — while simultaneously struggling with the operational realities of shipping production software at scale.

A quarter-million wasted API calls per day. An April Fools’ feature shipped live by accident. A source map that shouldn’t have been in the package.

The code itself tells a story about a company that is genuinely impressive in its technical ambition — KAIROS, if it ships, would be a qualitative leap in what AI coding tools can do — but navigating the same messy reality as every other fast-moving software organization.

The most important thing the leak revealed isn’t any single feature. It’s that the gap between what AI companies say they’re building and what they’re actually building is smaller than the mystique suggests. Claude Code is sophisticated. It’s also, at its core, a TypeScript app with a Bun runtime, a React terminal UI, and at least one embarrassing regex.

That’s both reassuring and exciting.

Resources

- Leaked source documentation (reconstructed): mintlify.com/VineeTagarwaL-code/claude-code

- claw-code (Python + Rust rewrite): github.com/instructkr/claw-code

- System prompts collection: github.com/Leonxlnx/claude-code-system-prompts

- Original disclosure tweet: x.com/Fried_rice/status/2038894956459290963

- Deep-dive analysis by Alex Kim: alex000kim.com

This article is for educational and journalistic purposes. The leaked source code is the intellectual property of Anthropic. If you are a developer using Claude Code, follow Anthropic’s official guidance on installation and security.
